Many organizations begin their AI journey with powerful ideas and impressive pilot projects. But when data expands into petabytes, latency requirements drop to milliseconds, and deployment enters the labyrinth of enterprise security, “lab-grown” AI often withers. The difference between AI experimentation and AI at scale often comes down to one critical factor: cloud architecture.

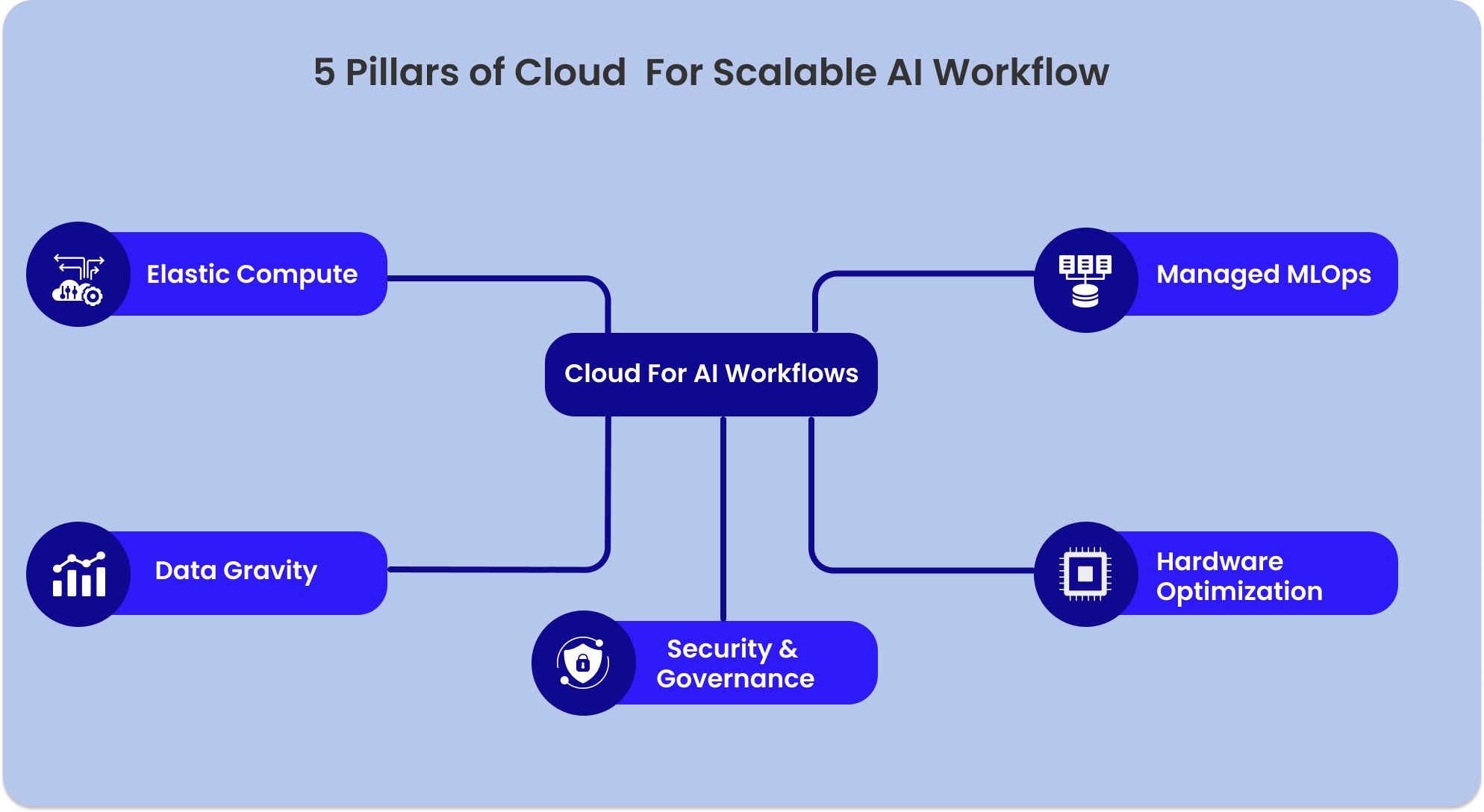

The cloud is not just about hosting; it is the operating model that enables AI workflows to scale and adapt securely. It transforms AI from a series of disconnected scripts into a living, breathing ecosystem. To move from the pilot phase to a functional Agentic Enterprise, organizations must build on five foundational pillars that make the cloud indispensable.

Pillar 1: Elastic Compute

According to Gartner’s 2026 Strategic Technology Trends, the rise of AI Supercomputing Platforms has made elastic infrastructure a non-negotiable requirement for enterprises hoping to scale. With elastic compute, your AI workload can expand to as many as 100 GPUs to complete a heavy training task in an hour, then shrink back to zero the moment it’s done. You pay only for what you use, and only while you use it.

Google Cloud’s ROI of AI 2025 report adds that 88% of “agentic AI” early adopters are already seeing positive returns, primarily by leveraging this flexibility to move from experimental pilots to production. In a local setup, a model that demands more memory than the machine has simply crashes. In the cloud, autoscaling provisions additional capacity on demand, and AI-powered FinOps, now essential for managing the “inference economics” of large-scale deployments, keeps that capacity aligned with budget.

This elasticity allows teams to:

- Train large models faster by bursting into massive GPU clusters on demand.

- Run experiments without hardware constraints, fostering a culture of rapid innovation.

- Optimize unit economics by scaling down instantly, ensuring costs align with business value.
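
The pay-per-use arithmetic behind these points can be sketched in a few lines. This is a minimal illustration, not a pricing model: the $2.50 per GPU-hour rate and the 30-day comparison window are assumptions chosen for the example, and the function names are hypothetical.

```python
def burst_cost(gpus: int, hours: float, price_per_gpu_hour: float) -> float:
    """Cost of renting `gpus` GPUs only for the `hours` a job actually runs."""
    return gpus * hours * price_per_gpu_hour


def always_on_cost(gpus: int, days: int, price_per_gpu_hour: float) -> float:
    """Cost of keeping the same GPUs provisioned around the clock for `days`."""
    return gpus * days * 24 * price_per_gpu_hour


# Assumed inputs: 100 GPUs, a one-hour training job, $2.50 per GPU-hour.
PRICE_PER_GPU_HOUR = 2.50
elastic = burst_cost(gpus=100, hours=1, price_per_gpu_hour=PRICE_PER_GPU_HOUR)
fixed = always_on_cost(gpus=100, days=30, price_per_gpu_hour=PRICE_PER_GPU_HOUR)

print(f"One burst job:         ${elastic:,.2f}")
print(f"Same fleet, always on: ${fixed:,.2f}")
```

The gap between the two figures is the cost of idle hardware, which is what scaling to zero is meant to remove.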

Pillar 2: Data Gravity

In AI, data is heavy. Moving massive datasets from on-premises environments to remote servers consumes excessive time, bandwidth, and money. This phenomenon, known as Data Gravity, describes the idea that as datasets grow larger, they become harder to move and naturally attract applications and services toward them. Data transfer delays are one of the biggest silent productivity killers for AI teams today.

Cloud architecture solves this by co-locating compute with data. Instead of moving terabytes back and forth across a narrow pipe, you bring the AI model (the “brain”) to where the data (the “library”) already lives.
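
To see why the “narrow pipe” matters, a rough transfer-time estimate helps. The sketch below assumes decimal terabytes, a 10 Gbps link, and an effective throughput of 80% of nominal bandwidth; all three are illustrative assumptions, and `transfer_hours` is a hypothetical helper.

```python
def transfer_hours(dataset_tb: float, link_gbps: float, efficiency: float = 0.8) -> float:
    """Hours needed to move `dataset_tb` terabytes over a `link_gbps` link.

    `efficiency` is the assumed fraction of nominal bandwidth actually achieved.
    """
    bits = dataset_tb * 1e12 * 8                  # decimal terabytes -> bits
    effective_bps = link_gbps * 1e9 * efficiency  # usable bits per second
    return bits / effective_bps / 3600


# Assumed scenario: one petabyte (1,000 TB) over a 10 Gbps link.
hours = transfer_hours(dataset_tb=1000, link_gbps=10)
print(f"{hours:,.1f} hours (about {hours / 24:.1f} days)")
```

Under these assumptions a single petabyte takes well over a week to move, which is the practical argument for running the model next to the data.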

“Data has gravity. As a data set grows in size, it becomes increasingly difficult and expensive to move. To maximize the performance of AI, you must move the compute to the data, not the other way around.”

— Dave McCrory, (the technology executive who coined the term Data Gravity.)

As models grow more complex and datasets reach petabyte scale, physical and economic constraints weigh more heavily on IT architecture: network latency, transfer cost, and the length of each iteration loop.

When data gravity is respected, innovation accelerates. By centralizing your “library” in an elastic cloud environment and deploying your “brains” directly within that same perimeter, you achieve:

- Near-instantaneous data access for real-time inference.

- Drastically reduced transfer costs and operational overhead.

- Maximum developer productivity, as teams spend more time tuning models and less time waiting for progress bars.

Pillar 3: Managed MLOps

In the enterprise, MLOps is the ecosystem in which models are deployed, versioned, monitored, and retrained. Without automation of this ecosystem, the “Data Gravity” advantage is lost to operational friction. Engineers find themselves spending up to 80% of their time managing infrastructure rather than improving intelligence. Manual version updates, environment mismatches, and silent pipeline failures often lead to “Model Decay,” where AI performance drifts as the real world changes.

Managed cloud services act as a digital foreman, automating the heavy lifting so your experts can focus on improving the models themselves:

- Automated Training Pipelines: Drastically reducing the time from data ingestion to model readiness.

- Version Control & Reproducibility: Ensuring every model version can be traced back to the exact dataset and code used to create it, which is a must for regulatory compliance.

- Continuous Monitoring & Observability: Detecting “drift” the moment your model begins to lose accuracy in the real world.

- Automated Retraining: Seamlessly updating models with fresh data without manual intervention.

- Seamless Deployment: Moving models from a developer’s notebook to a global API with a single click.
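
As one illustration of the monitoring step, drift can be flagged by comparing the distribution of a feature in production against its training baseline. The sketch below uses the Population Stability Index (PSI), a technique chosen here for illustration rather than one the pillars prescribe. The bin count, the 0.2 alert threshold, and the sample data are all assumptions.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp outliers into edge bins
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


DRIFT_THRESHOLD = 0.2  # assumed alert level, tune per use case

baseline = [i / 100 for i in range(100)]    # synthetic training-time feature values
live = [0.5 + i / 200 for i in range(100)]  # synthetic production values, shifted upward

score = psi(baseline, live)
print(f"PSI = {score:.2f}, drift detected: {score > DRIFT_THRESHOLD}")
```

In a managed pipeline, a score above the threshold would typically trigger an alert or a retraining job rather than a print statement.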

Pillar 4: Hardware Optimization

Without hardware optimization, scaling an AI strategy quickly leads to “bill shock” and runaway operational expenses. AI workflows are also not monolithic. They have distinct stages, each requiring a different “engine”:

- Training: This requires massive, interconnected GPU clusters to train models with billions or even trillions of parameters.

- Inference: Once a model is trained, “serving” it to users requires far less power. Using specialized inference chips (like AWS Inferentia or Google TPUs) can reduce costs by up to 70% compared to standard GPUs.

- Lightweight Logic: Simple data preprocessing or basic classification often runs most efficiently on high-frequency CPUs, saving the expensive silicon for the heavy math.

The cloud allows you to be an architectural chameleon. You can train on high-performance infrastructure, then instantly deploy the resulting model onto low-cost, optimized hardware. This flexibility is the secret to Inference Economics, a concept growing in importance as usage scales.
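
Matching a workload to hardware comes down to comparing cost per prediction. The following sketch shows the calculation; every hourly price and throughput figure is a made-up placeholder, not a benchmark of any real chip, and the `Hardware` class is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hardware:
    name: str
    price_per_hour: float          # assumed USD per instance-hour
    predictions_per_second: float  # assumed sustained throughput

    def cost_per_million(self) -> float:
        """USD to serve one million predictions at full utilization."""
        predictions_per_hour = self.predictions_per_second * 3600
        return self.price_per_hour / predictions_per_hour * 1_000_000


# Placeholder figures for illustration only.
options = [
    Hardware("general-purpose GPU", price_per_hour=4.00, predictions_per_second=2000),
    Hardware("inference accelerator", price_per_hour=1.50, predictions_per_second=1800),
    Hardware("CPU", price_per_hour=0.40, predictions_per_second=150),
]

for hw in options:
    print(f"{hw.name:<22} ${hw.cost_per_million():.2f} per million predictions")

cheapest = min(options, key=Hardware.cost_per_million)
print(f"Cheapest for this workload: {cheapest.name}")
```

The cheapest hourly rate does not win automatically. With these placeholder numbers the CPU is the least expensive per hour but the most expensive per prediction.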

“As AI moves from research to production, the focus shifts from training performance to inference efficiency. The organizations that win will be those that can optimize their ‘cost-per-prediction’ by matching the workload to the most efficient silicon available.”

— Jensen Huang, CEO of NVIDIA.

By leveraging a cloud-native, heterogeneous hardware strategy, enterprises achieve:

- Granular Cost Control: Paying for specialized compute in per-second or finer increments rather than for idle, expensive hardware.

- Performance Optimization: Ensuring low latency for end-users by using hardware specifically tuned for rapid response times.

- Sustainable Scaling: Building a foundation where adding the millionth user doesn’t cost as much as the first.

Pillar 5: Security & Governance

No organization can afford to have sensitive customer data, medical records, or proprietary trade secrets accidentally “leaked” into public model training sets. Cloud environments solve this by treating security not as an afterthought, but as the foundational layer of the architecture. Through constructs like Virtual Private Clouds (VPCs), your AI operates in a digitally fenced-off “clean room,” ensuring that your data and the models learning from it never leave your controlled perimeter.

By leveraging cloud-native security, organizations can enforce strict governance without slowing down innovation:

- Private Data Silos: Your proprietary data is used to fine-tune your specific model instances, with a “hard wall” preventing that information from ever reaching the public provider’s base models.

- Identity & Access Management (IAM): Granular controls ensure that only authorized developers and specific automated “Skills” can access sensitive datasets.

- End-to-End Encryption: Data remains encrypted both “at rest” (in the library) and “in transit” (as it travels to the brain).
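
The access-control idea above can be pictured as a deny-by-default check: a request succeeds only when an explicit grant matches it. The sketch below is a toy model. Real cloud IAM systems have richer semantics such as roles, conditions, and explicit denies, and every principal, action, and path shown is hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Grant:
    principal: str        # who: a developer or an automated skill
    action: str           # what: e.g. "read" or "write"
    resource_prefix: str  # where: datasets under this path


def is_allowed(grants: Iterable[Grant], principal: str, action: str, resource: str) -> bool:
    """Deny by default; allow only when an explicit grant matches the request."""
    return any(
        g.principal == principal
        and g.action == action
        and resource.startswith(g.resource_prefix)
        for g in grants
    )


# Hypothetical grants for illustration.
grants = [
    Grant("skill:claims-summarizer", "read", "datasets/claims/"),
    Grant("dev:alice", "read", "datasets/public/"),
]

print(is_allowed(grants, "skill:claims-summarizer", "read", "datasets/claims/2024.parquet"))
print(is_allowed(grants, "dev:alice", "read", "datasets/claims/2024.parquet"))
```

The first request matches a grant and is allowed. The second has no matching grant for that path and is denied, which is the behavior a least-privilege setup aims for.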

By respecting these governance boundaries, enterprises achieve:

- Zero-Leakage Assurance: Total confidence that private data stays private.

- Regulatory Resilience: The ability to meet evolving AI laws and compliance requirements effortlessly.

- Governance at Scale: Centralized control over every model, skill, and dataset, ensuring the organization’s risk management goals are always met.

Conclusion

The transition from AI experimentation to industrial-scale deployment necessitates a fundamental shift in architecture, positioning the cloud as the definitive operating system for the modern enterprise. By harmonizing elastic compute for agility, data gravity for localized efficiency, managed MLOps for operational stability, and hardware optimization for fiscal sustainability, organizations create a robust framework where security and governance are native, rather than elective.

As we look toward the immediate horizon, the “cloud front” will evolve beyond mere infrastructure into an autonomic orchestrator, characterized by self-optimizing “Agentic Clouds” that dynamically reconfigure silicon and data pathways in real time. The future belongs to the organizations that view these five pillars not as individual technical choices, but as a unified, sovereign foundation for responsible and limitless innovation.