Modernizing Retail Data Architecture for Scalable, SLA-Driven Reporting

A leading US retail organization with a large and diverse product portfolio relied for years on a collection of fragmented data systems to manage its sales orders and inventory. Over time, what began as a manageable setup had grown into a web of inconsistent reports, scattered metrics, and limited cost visibility, making it increasingly difficult for the business to trust its own data. To address this, we partnered with the client to reimagine its data foundation from the ground up, implementing a modernized architecture with distinct staging, transformation, and reporting layers. This brought structure, accuracy, and scalability to an environment long held back by technical debt. The result was a platform built to solve today’s reporting challenges and to grow confidently alongside the business.

The Challenges

Before the transformation, the client’s data environment was riddled with compounding inefficiencies. Each issue fed into the next, creating a cycle that made reliable, timely reporting feel out of reach.

- Fragmented KPI Definitions Across Environments: Business KPIs were distributed across multiple environments, leading to inconsistent metric calculations and a lack of any single source of truth. Governance was difficult to enforce, and different teams were often working from different versions of the same number.

- Proliferation of Stored Procedures per Reporting Use Case: Over the years, multiple report-specific stored procedures had accumulated across the system. This tight coupling between data processing and reporting led to significant code redundancy and made the environment increasingly difficult to maintain or evolve.

- Decentralized Legacy Business Logic: Transformation logic had been implemented piecemeal across various layers and systems. Business rules were duplicated, outputs were inconsistent, and tracing data lineage from source to report had become a near-impossible exercise.

- ETL Pipeline Performance Degradation and SLA Breach: The reporting system was failing to meet defined SLAs. The introduction of Slowly Changing Dimensions (SCD Type 2) and data retention and purge mechanisms had significantly increased ETL processing times, causing prolonged batch execution cycles that the existing infrastructure simply couldn’t absorb.

- Delayed Data Availability for Reporting: The extended ETL durations had a direct downstream impact on the Power BI semantic models. Reports became available later, and data was less fresh for business users who depended on timely insights to make decisions.

- Inefficient Deployment and Release Management: Without a standardized CI/CD pipeline in place, code migration across environments was done manually. Deployments were error-prone, time-consuming, and difficult to track or version.
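
For readers less familiar with SCD Type 2, the minimal Python sketch below shows why it adds ETL work. It is an illustration under stated assumptions, not the client’s implementation. The `DimRow` structure, the `apply_scd2` function, and the half-open `valid_from`/`valid_to` convention are all hypothetical. Every changed attribute expires the current row and inserts a new version, so the dimension only ever grows. That growth is why retention and purge logic usually follows.

```python
from dataclasses import dataclass, replace
from datetime import date

# Sentinel end date marking the current version of a row (an assumption;
# some teams use NULL instead).
OPEN_END = date(9999, 12, 31)


@dataclass(frozen=True)
class DimRow:
    """One version of a dimension member, e.g. a product (hypothetical schema)."""
    business_key: str          # natural key from the source, e.g. a SKU
    attributes: tuple          # tracked attributes, e.g. (category, price_band)
    valid_from: date           # first day this version applies (inclusive)
    valid_to: date = OPEN_END  # first day it no longer applies (exclusive)
    is_current: bool = True


def apply_scd2(dimension: list[DimRow], incoming: dict[str, tuple], load_date: date) -> list[DimRow]:
    """Apply one batch of source records to a Type 2 dimension.

    - New business key: insert a current version.
    - Changed attributes: expire the current version and insert a new one.
    - Unchanged attributes: no write at all.
    Expired versions are never deleted here, so the table only grows.
    """
    current = {row.business_key: row for row in dimension if row.is_current}
    result: list[DimRow] = []

    # Pass 1: expire current versions whose tracked attributes changed.
    for row in dimension:
        new_attrs = incoming.get(row.business_key)
        if row.is_current and new_attrs is not None and new_attrs != row.attributes:
            result.append(replace(row, valid_to=load_date, is_current=False))
        else:
            result.append(row)

    # Pass 2: insert a new current version for new or changed keys.
    for key, attrs in incoming.items():
        existing = current.get(key)
        if existing is None or existing.attributes != attrs:
            result.append(DimRow(key, attrs, valid_from=load_date))

    return result
```

Suppose one SKU is loaded in January and then reloaded in February with a different price band. The result is two rows: the expired January version and the current February version. Re-running a load with unchanged attributes writes nothing.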

The Solution

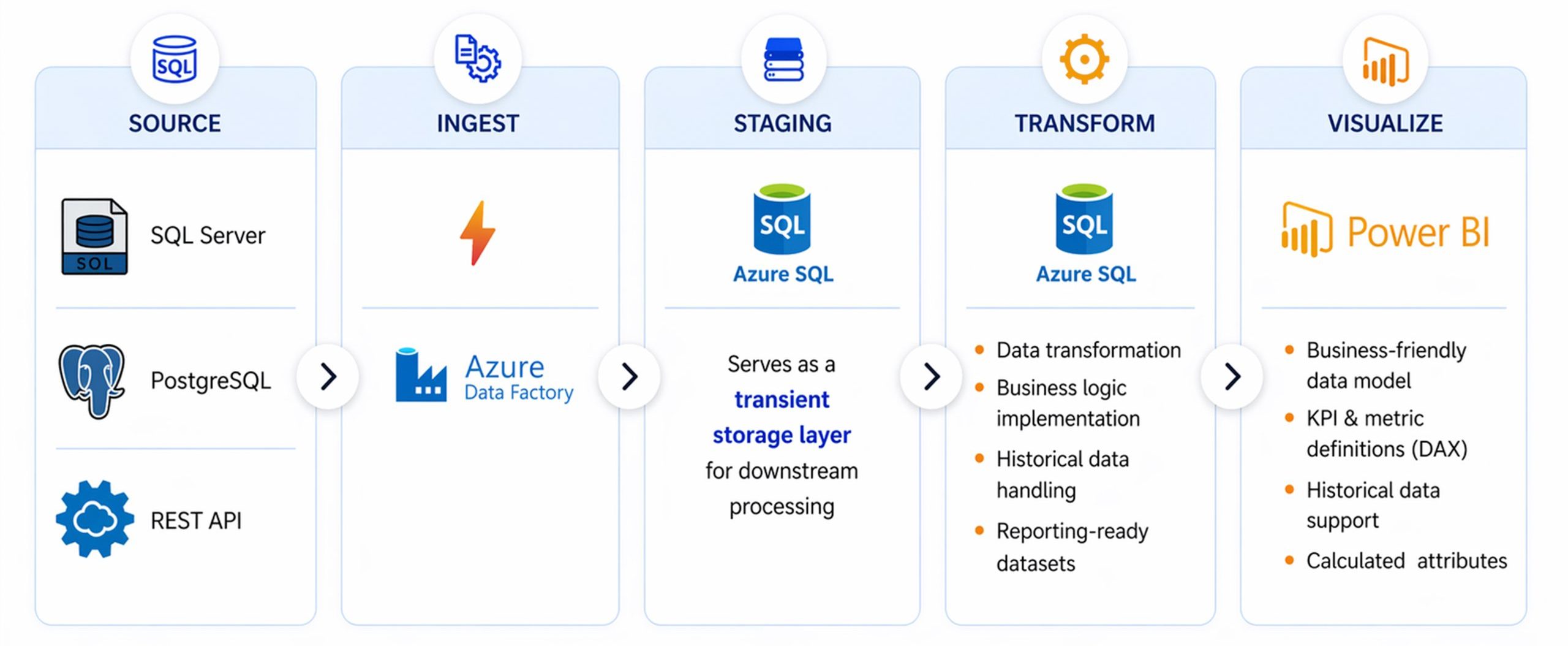

We took a holistic, architecture-first approach to re-engineering the client’s data ecosystem. Rather than patching individual pain points, the team designed a coherent, layered platform in which each component reinforces the others, from ingestion through to visualization.

Solution Architecture

- Unified KPI Reporting Layer: Sales and inventory KPIs were standardized within a common reporting layer, establishing cross-domain consistency that all teams could rely on regardless of which report or system they were working from.

- Centralized Semantic Model: A Power BI Tabular Model with DAX-based KPIs was implemented to serve as the governed, single source of truth. This gave business users a reliable, consistent foundation for every metric and report they produced.

- Modular ETL/ELT Framework: Stored procedures were refactored into reusable, parameterized data pipelines. This eliminated redundancy, reduced maintenance overhead, and gave the team a scalable framework for adding new reporting needs without creating new technical debt.

- Centralized Business Logic Layer: Transformation rules were consolidated into a dedicated processing layer. This ensured consistent logic across all data flows and greatly improved data lineage, making it possible to trace any number back to its source.

- Layered Data Architecture (Azure SQL): An Azure SQL layered model spanning Staging (STG), Core (DBO), and Reporting (RPT) layers was adopted. This structured separation of concerns brought order to the data flow and made each layer independently manageable and auditable.

- ETL Orchestration via Azure Data Factory: Azure Data Factory was used for scheduling, orchestration, and pipeline management. It provides a robust, centrally controlled engine for all data movement across the environment.

- Secure Data Integration with Microsoft Entra ID: The architecture was integrated with Microsoft Entra ID to enable API-based data ingestion with secure, governed access control. This ensures that sensitive data is handled safely at every point in the pipeline.

- CI/CD Pipeline Enablement: Automated deployment pipelines were implemented to bring consistency, speed, and control to every release. They replaced the manual, error-prone processes that had previously slowed the team down.
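
To make the unified KPI layer and the modular pipeline framework more concrete, here is a minimal Python sketch. It is not the platform’s actual code: the source describes DAX measures and Azure SQL pipelines, which are not reproduced here. The KPI names, the column names, and the `build_report` function are hypothetical. Each KPI is defined once in a shared registry, and a single parameterized function produces any report grain, instead of one stored procedure per report.

```python
from collections import defaultdict

# Additive base measures stored at the lowest grain (hypothetical column names).
BASE_MEASURES = ("net_sales", "units_sold", "on_hand_units")


def _ratio(numerator: float, denominator: float, scale: float = 1.0):
    """Safe division: returns None instead of raising on a zero denominator."""
    return scale * numerator / denominator if denominator else None


# Single registry of KPI definitions (hypothetical KPIs). Every report reads
# from here, so a KPI can only be calculated one way.
KPI_DEFINITIONS = {
    "net_sales": lambda m: m["net_sales"],
    "units_sold": lambda m: m["units_sold"],
    "avg_selling_price": lambda m: _ratio(m["net_sales"], m["units_sold"]),
    "sell_through_pct": lambda m: _ratio(
        m["units_sold"], m["units_sold"] + m["on_hand_units"], scale=100.0
    ),
}


def build_report(rows, group_by, kpis):
    """One parameterized pipeline in place of many report-specific procedures.

    rows:     iterable of dicts from the reporting layer
    group_by: tuple of column names that sets the report grain
    kpis:     KPI names, each of which must exist in KPI_DEFINITIONS
    """
    unknown = [name for name in kpis if name not in KPI_DEFINITIONS]
    if unknown:
        raise KeyError(f"Undefined KPIs: {unknown}")

    # Sum additive measures first; ratios are derived from the totals below,
    # never averaged across rows.
    totals = defaultdict(lambda: dict.fromkeys(BASE_MEASURES, 0.0))
    for row in rows:
        key = tuple(row[col] for col in group_by)
        for measure in BASE_MEASURES:
            totals[key][measure] += row[measure]

    report = []
    for key in sorted(totals):
        out = dict(zip(group_by, key))
        out.update({name: KPI_DEFINITIONS[name](totals[key]) for name in kpis})
        report.append(out)
    return report
```

For example, `build_report(rows, ("region",), ["net_sales", "avg_selling_price"])` and `build_report(rows, ("region", "store"), ["net_sales"])` both reuse the same definitions. Summing base measures before deriving ratios is a deliberate choice. Averaging a ratio such as average selling price across stores would weight small and large stores equally, and it would give a different number from the one computed on totals.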

Key Improvements

The architectural changes delivered measurable improvements across the entire data lifecycle. SCD and purge logic were optimized to reduce processing time, and ETL workflows were refactored to remove the bottlenecks that had previously caused SLA breaches. Incremental data loads replaced costly full refreshes wherever possible, further shortening processing cycles. The new CI/CD pipelines enabled faster, more consistent releases, and a streamlined data flow into the reporting layer gave business users access to insights sooner.
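
The incremental-load and purge ideas can be sketched in a few lines of Python. This is a hedged illustration, not the client’s pipeline. The `modified_at` and `valid_to` columns, the retention window, and both function names are assumptions, as is the convention that current versions carry `valid_to = None`. Microsoft’s Azure Data Factory documentation describes a comparable high-watermark pattern. It uses Lookup activities to read the old and new watermark, a Copy activity for the changed rows, and a Stored Procedure activity to update the watermark.

```python
from datetime import datetime, timedelta


def incremental_extract(source_rows: list[dict], last_watermark: datetime) -> tuple[list[dict], datetime]:
    """High-watermark incremental load.

    Returns only rows modified after the previous run's watermark, plus the
    watermark to persist for the next run. A full refresh would instead
    re-read and reload every row on every run.
    """
    changed = [row for row in source_rows if row["modified_at"] > last_watermark]
    # If nothing changed, keep the old watermark rather than resetting it.
    new_watermark = max((row["modified_at"] for row in changed), default=last_watermark)
    return changed, new_watermark


def purge_expired(history_rows: list[dict], as_of: datetime, retention_days: int) -> list[dict]:
    """Retention purge for versioned (e.g. SCD Type 2) history.

    Keeps current versions (assumed to have valid_to = None) and any version
    that expired within the retention window; drops older expired versions.
    """
    cutoff = as_of - timedelta(days=retention_days)
    return [row for row in history_rows if row["valid_to"] is None or row["valid_to"] >= cutoff]
```

The strict `>` comparison assumes that no row can arrive later with a timestamp equal to or earlier than the stored watermark. If such late-arriving rows are possible, a common refinement is to re-read a small overlap window and de-duplicate.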

Business Impact

The transformation delivered meaningful, measurable outcomes across the business, touching everything from daily operations to long-term decision-making.

| Impact Area | Outcome |
|---|---|
| On-time Reporting | Reports are now consistently delivered within SLA timelines, giving business users reliable access to the insights they need, when they need them. |
| Faster Processing | Significant reductions in ETL and refresh duration mean the data pipeline no longer acts as a bottleneck to the business. |
| Reliable Data Access | Improved availability and stability across the reporting environment mean users can depend on the data being there when they need it. |
| Seamless Deployments | Automated, faster, and error-free releases have replaced the manual migration processes that previously introduced risk with every update. |
| Better Decisions | Accurate and timely insights are now driving better business outcomes across the client’s operations. |
| Trusted Data | Consistent KPIs across all reports and teams have established a single source of truth that the entire organization can rely on. |
| Operational Efficiency | Faster, more reliable data supports daily business operations and reduces the time spent chasing or reconciling numbers. |
| Reduced Risk | Controlled, governed processes have minimized the risk of reporting errors and of the downstream business decisions those errors could affect. |
| Business Agility | The new architecture makes it far easier to adapt quickly to changing reporting needs, without the rework that previously made change so costly. |
| Cost Efficiency | Optimized resource usage and reduced manual effort have meaningfully lowered the operational cost of running the client’s data environment. |

Conclusion

This use case is a clear illustration of what becomes possible when an organization commits to getting its data foundation right. By moving away from a fragmented, procedure-heavy environment toward a standardized, layered architecture with a centralized semantic model, the business gained something it had long struggled to achieve: consistent KPIs, faster processing, and reporting that reliably meets its SLAs.

Beyond the technical improvements, the real impact has been felt in how the business operates day to day. It now has greater confidence in its data, spends more time on decisions than on data reconciliation, and runs on a platform that is genuinely built to scale. This is the kind of transformation Emergere delivers.